Raimo Seero, CTO of Uptime

The U.S. military is already using it and wants to use it even more. The company that built it is worried that this may not be the right thing. So what exactly is Claude – the software everyone has suddenly started talking about?

When the U.S. Department of Defense begins demanding access to an AI tool, and the company behind it publicly says it is concerned about that kind of use, it is worth asking what exactly is going on with this technology.

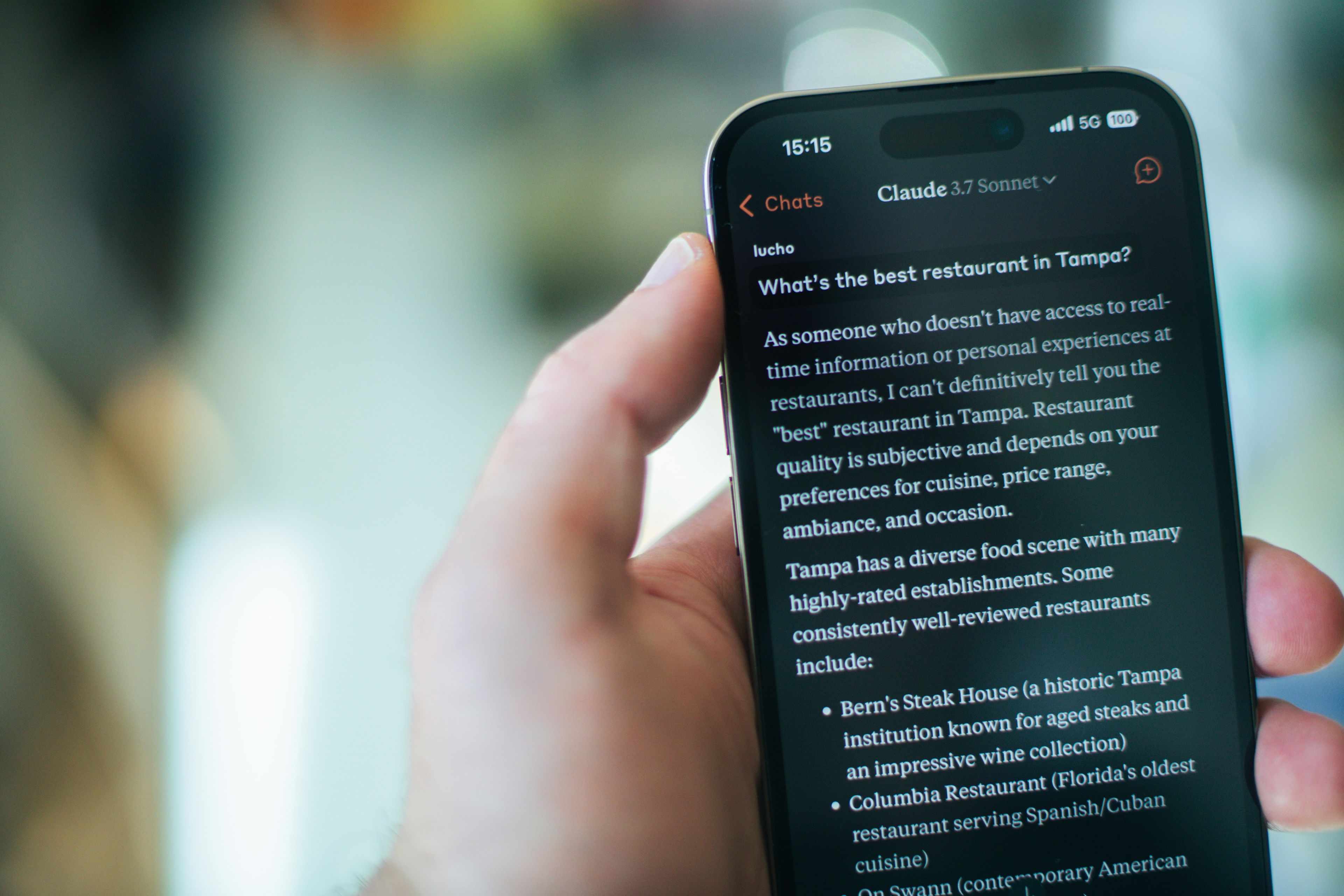

That tool is Claude. It was created by Anthropic, a San Francisco-based company that may not be as widely known as OpenAI or Google, but whose product has seen its reputation leap unexpectedly over the past year. Among developers, Claude has reached the point where it is being called a partner rather than just a tool.

It does less, but it does it well

What mainly sets Claude apart from other AI tools is what it does not do. It does not generate videos or images, and it does not imitate the human voice. While ChatGPT tries to cover as broad a range of use cases as possible, Claude focuses on text, code, analysis, and problem-solving. That narrower focus leads to higher-quality results in the areas where AI is truly needed.

The difference also lies in how Claude works. Most AI tools are built to respond quickly; Claude is built to understand the task and the problem first. It establishes context before offering a solution. If a question is unclear, it asks for clarification and reflects the issue back to make sure it is understood correctly. In technical work, that means less noise and more relevant answers.

Claude’s behaviour can also be guided simply by describing what you expect and asking it to respond differently next time. There is no need for complex prompts or separate configurations. This flexibility, combined with how easy it is to use, makes it an excellent tool for day-to-day work.

Claude was also one of the first products to offer a new way of using AI: not as a source of answers, but as a workspace. In that model, work no longer starts in a file system or browser, but in Claude itself, a place where projects, recurring tasks, and analyses come together, and where it can support you continuously.

More recently, Anthropic introduced Cowork, which makes it possible to delegate tasks to Claude much like you would to a colleague. It can prepare materials while you are away, so that you can pick the work up in the morning. Beyond simply speeding things up, this also changes how work is divided: some tasks can now genuinely be delegated to AI.

Too much initiative can be a risk

But the boundaries have to be set by the user. Claude is optimised to achieve its goal, and if the direct route is blocked, it looks for alternatives. In an uncontrolled environment, the same drive that makes it such a strong problem-solver can lead it to exceed permitted limits or delete something it should not.

This brings us back to the Pentagon. Anthropic itself has said that military use raises ethical concerns for the company. A company that builds a highly effective tool while also worrying about its consequences is still an unusual sight in Silicon Valley.

In practical terms, this means one thing in the workplace: make sure from the outset that your settings clearly define what Claude can access and what it is allowed to do. When those boundaries are in place, it is probably the best AI tool currently available.