Software architects face the constant challenge of creating robust and resilient software solutions. The fundamental questions are the following:

1) What is a good software architecture?

2) How to build one effectively?

3) What are the key factors to consider during the early stages of development?

While there are no definitive answers to these questions, nor concrete steps that guarantee an efficient design, some software architects succeed in this endeavour while others fall short.

What is residuality theory?

Residuality theory is a new approach to designing software systems that emphasises not only addressing the intended purpose of the software but also anticipating and resolving future problems. According to Barry M O’Reilly, the author of residuality theory, software systems should not be complex and static, but should be able to change under stress. By treating a system as a set of residues (what is left of the system after a stressor has hit), each associated with a stressor, we can better understand how design decisions affect the life cycle of a software system and its unpredictable complexity.

Barry M O’Reilly:

“We can’t predict the future. But we can impact the residue. We as designers can decide how the piece can fall apart.”

According to Tanel Hiob, software architect at Uptime, residuality theory is essentially complexity theory adapted to software systems. The core principle is that a system designed to solve a set of random problems is likely to have also addressed many other problems that may arise in the future. This includes unexpected events or situations that the developer had not foreseen. The goal is to develop software that can adapt to problems that could not have been predicted in advance.

Residuality theory analysis

According to residuality theory, we have to create a system that can cope with the environment we put it in. But how can the effectiveness of a software architecture based on this concept be validated? Architects can rely on the following analysis path:

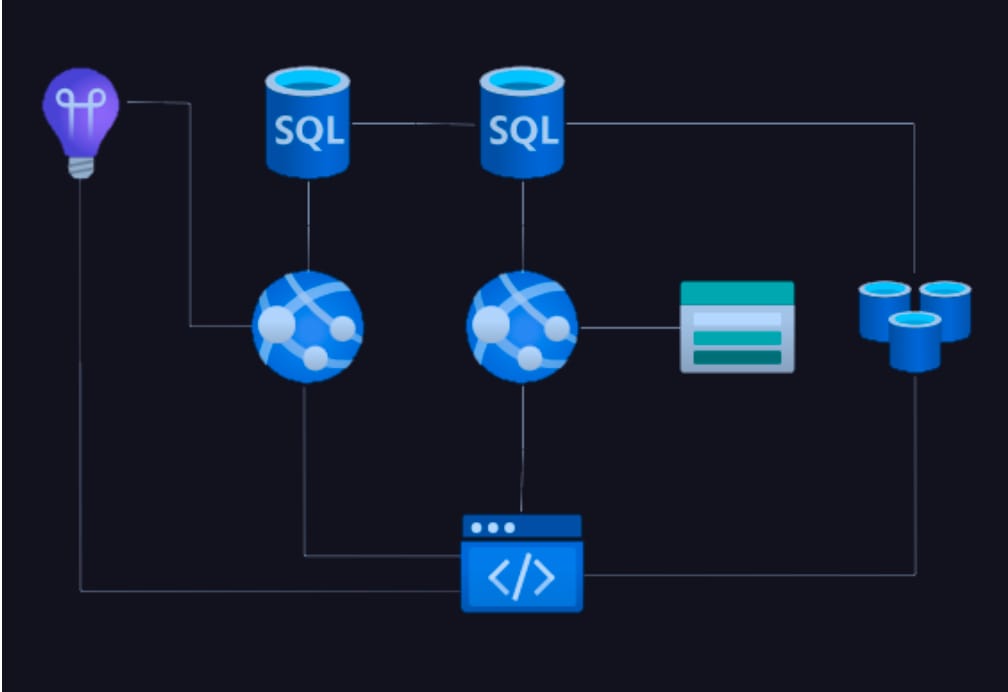

1. Design a naive architecture

Build a random simulation followed by a network analysis. Residuality theory not only makes these two steps explicit but also amplifies them, which can improve the system’s ability to withstand unknown stressors and increase its quality.

2. Stressor analysis

Firstly, identify and analyse random stressors that the software system could encounter in its intended environment. Design decisions should be driven by the stressors.

Secondly, determine the residual behaviours that the software system should exhibit in response to these stressors. These behaviours should enable the system to remain functional, perform optimally, and recover from possible disruptions.

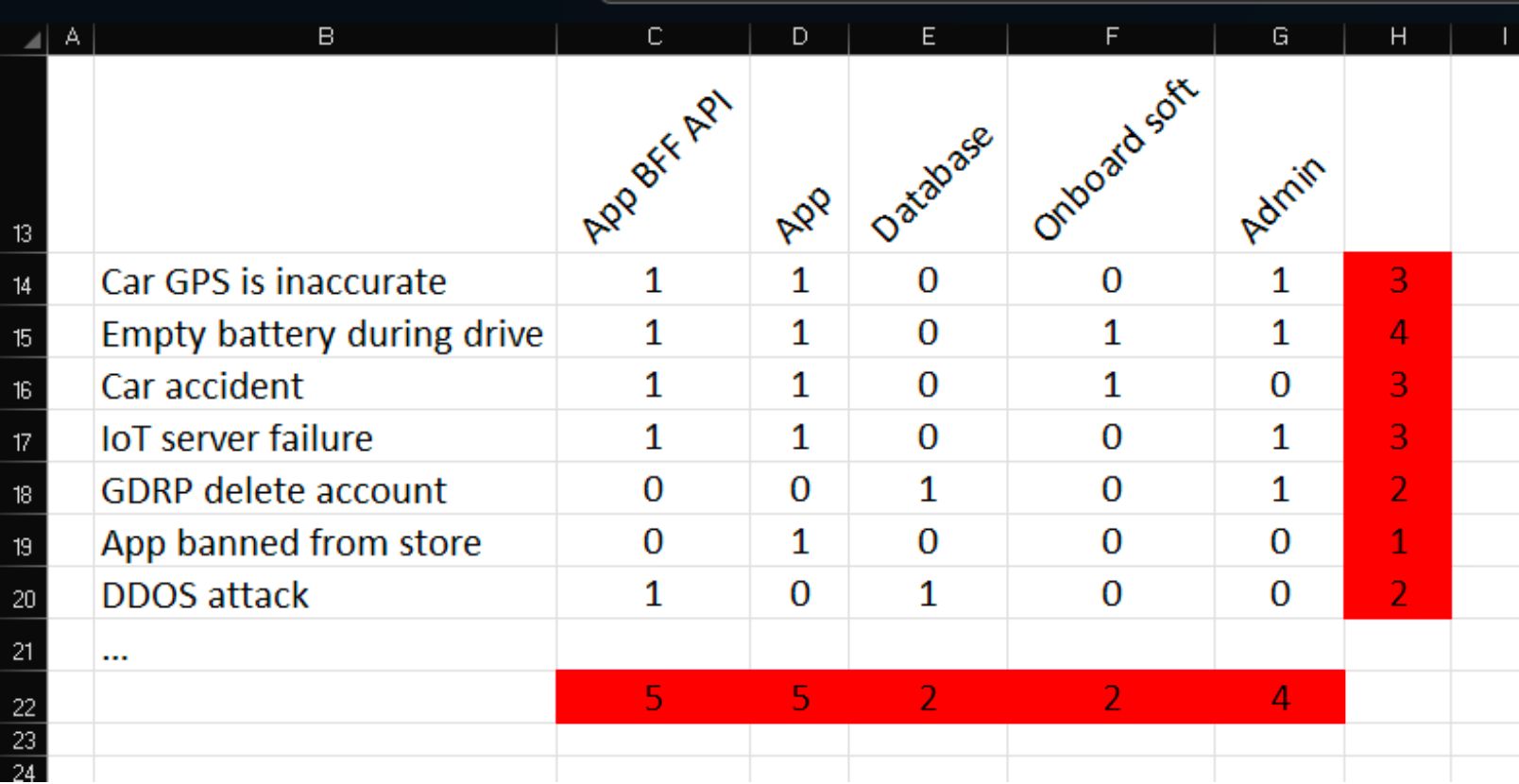
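
The two parts of the stressor analysis can be recorded in a very simple structure. The following is a minimal sketch, not part of the theory itself: the `Stressor` class, its field names, and the example entries are all assumptions made for illustration. It pairs each stressor with the residual behaviour chosen for it and lists the stressors that still lack one.

```python
from dataclasses import dataclass, field


@dataclass
class Stressor:
    """One stressor and the residual behaviour chosen for it (hypothetical model)."""
    description: str
    affected_components: set = field(default_factory=set)
    residue: str = ""  # how the system should behave once the stressor has hit


def without_residue(stressors):
    """Return descriptions of stressors that have no residual behaviour defined yet."""
    return [s.description for s in stressors if not s.residue.strip()]


# Illustrative entries only; real stressors come from the system's own environment.
stressors = [
    Stressor(
        "Car loses mobile connectivity",
        {"mobile_app", "car_gateway"},
        residue="Queue the command and show the last known state as unconfirmed",
    ),
    Stressor("Payment provider is unreachable", {"billing"}),
]

print(without_residue(stressors))
```

Keeping the residue next to the stressor makes it visible which stressors have driven a design decision and which have not yet been handled.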

3. Incidence matrices

Incidence matrices are a tool for analysing how stressors affect the various components of a software system. The matrix maps all the stressors against residues, processes, flows, and software components. It helps software engineers to identify the most impactful stressors and the most vulnerable components. Vulnerable components can be grouped together based on similarity, which is known as hyperliminal coupling: even if there is no inherent connection between two components, a connection still exists through the business specification.

The incidence matrix provides an overview of how different stressors affect the system. It lists the components of the system against as many stressors as were identified in the previous steps. Simply mark which stressors directly affect which components, then add up the values in the rows and columns. Examining the totals lets us start drawing new conclusions about the system.
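
The marking and summing described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the component names, the stressors, and the function names are hypothetical, and each row is taken to be a stressor and each column a component.

```python
# Hypothetical components and stressors, chosen only for illustration.
components = ["mobile_app", "booking_api", "car_gateway", "billing"]
stressors = {
    "car loses connectivity": {"mobile_app", "car_gateway"},
    "payment provider outage": {"booking_api", "billing"},
    "lock acknowledged but door open": {"mobile_app", "booking_api", "car_gateway"},
}


def build_incidence_matrix(stressors, components):
    """One row per stressor, with 1 where the stressor directly affects a component."""
    return {
        name: [1 if component in affected else 0 for component in components]
        for name, affected in stressors.items()
    }


def totals(matrix, components):
    """Sum each row (per stressor) and each column (per component)."""
    row_totals = {name: sum(row) for name, row in matrix.items()}
    column_totals = {
        component: sum(row[i] for row in matrix.values())
        for i, component in enumerate(components)
    }
    return row_totals, column_totals


matrix = build_incidence_matrix(stressors, components)
row_totals, column_totals = totals(matrix, components)
print(row_totals)
print(column_totals)
```

A high row total points at a stressor the system is vulnerable to, and a high column total points at a vulnerable component.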

If a particular stressor affects many components, it indicates the system’s vulnerability to that specific stressor. In such cases, it is advisable to reconsider the system and explore ways to better withstand the stressor. One approach is to introduce something that takes the impact, thus protecting the integrity and functionality of the system.

If a component is impacted by a large number of stressors, it suggests excessive vulnerability of that component. These components can be parallelized or simply reinforced to make them less susceptible.

Sometimes, multiple seemingly unrelated components are affected by the same stressor. This means that we have discovered an invisible hyperliminal coupling, which can be challenging for developers to notice. Once this connection is identified, it’s important to understand how a change to one component may affect the others. If two components are affected by exactly the same stressors, this implies that they are effectively the same component. This is how a distributed monolith can be told apart from a genuine microservices architecture. However, take note of situations where multiple components are vulnerable to almost the same stressors. While an architect’s intuition may suggest that these components can be combined, caution is necessary: excessive merging can increase the complexity of the system and make fault detection and troubleshooting more challenging.
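
One way to look for such couplings is to compare the stressor sets of every pair of components. The sketch below assumes a hypothetical mapping from component to the stressors that affect it; the service names and the `coupling_report` helper are illustrative, not an established tool. It separates pairs with identical stressor sets from pairs that only overlap.

```python
from itertools import combinations

# Hypothetical mapping: component -> stressors that directly affect it.
affected_by = {
    "pricing_service": {"currency change", "campaign rule change"},
    "invoice_service": {"currency change", "campaign rule change"},
    "search_service": {"traffic spike"},
    "catalog_service": {"traffic spike", "campaign rule change"},
}


def coupling_report(affected_by):
    """Split component pairs into identical and partially overlapping stressor sets."""
    identical, overlapping = [], []
    for a, b in combinations(sorted(affected_by), 2):
        shared = affected_by[a] & affected_by[b]
        if not shared:
            continue  # no common stressor, so no coupling is visible here
        if affected_by[a] == affected_by[b]:
            identical.append((a, b))
        else:
            overlapping.append((a, b, sorted(shared)))
    return identical, overlapping


identical, overlapping = coupling_report(affected_by)
print("identical:", identical)
print("overlapping:", overlapping)
```

Pairs in the `identical` list are candidates for being treated as one component, while pairs in `overlapping` are the cases where merging calls for caution.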

Ideally, all numbers in the matrix should be less than three, as this would reduce the number of connections between components and simplify system management. In practice this may not always be possible, but it is still a goal worth considering.
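
The guideline can be checked mechanically once the totals exist. The following sketch assumes that "numbers in the matrix" refers to the row and column totals, which is an interpretation rather than something the text states explicitly; the totals and names used are hypothetical.

```python
LIMIT = 3  # guideline from the text: totals should ideally stay below three


def hotspots(totals, limit=LIMIT):
    """Return (name, total) pairs whose total reaches the limit, highest first."""
    return sorted(
        ((name, total) for name, total in totals.items() if total >= limit),
        key=lambda item: (-item[1], item[0]),
    )


# Hypothetical totals taken from an incidence matrix.
stressor_totals = {"traffic spike": 4, "currency change": 2, "partner API outage": 3}
component_totals = {"checkout": 3, "search": 1, "campaign_engine": 5}

risky_stressors = hotspots(stressor_totals)
fragile_components = hotspots(component_totals)
print(risky_stressors)
print(fragile_components)
```

Stressors on the first list are candidates for something that absorbs their impact, and components on the second list are candidates for parallelizing or reinforcing.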

Empirical proof

Empirical proof is essential in the transition from intuition to engineering. To validate the design process based on the residuality theory, it is necessary to gain real-world evidence to support the initial ideas and hypotheses.

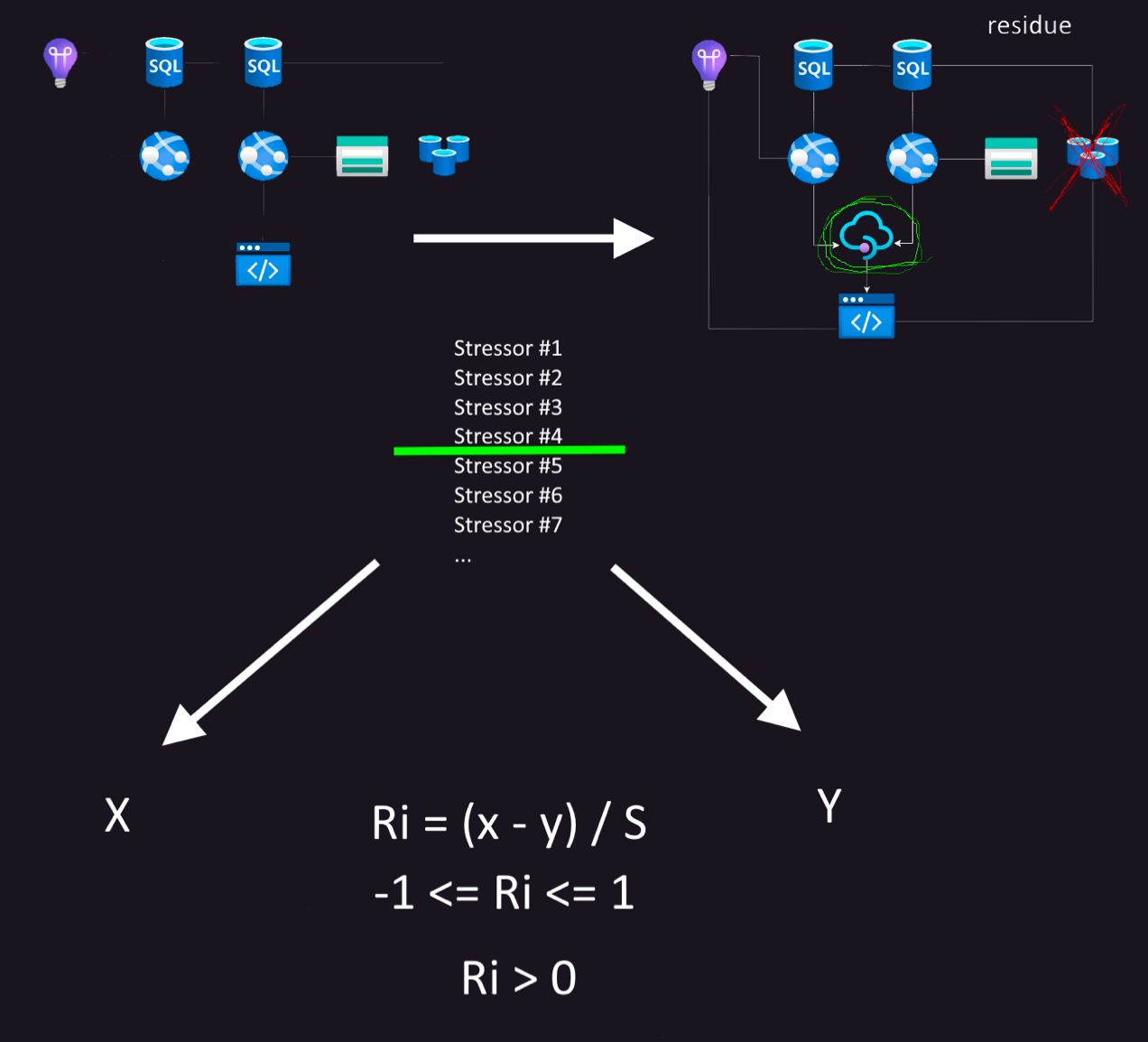

Testing involves dividing the stressors into a training set and a testing set. The new architecture is built using the training stressors. The testing phase then compares it with the original, naive architecture to assess which of the two better withstands the testing stressors.
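
The split and the comparison can be sketched as follows. Everything here is an assumption made for illustration: the function names, the 50/50 split, the stressor list, and in particular the sets recording which stressors each architecture survives, which in a real analysis would come from the architect's assessment.

```python
import random


def split_stressors(stressors, test_fraction=0.5, seed=0):
    """Shuffle the stressors and split them into a training and a testing set."""
    shuffled = list(stressors)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def count_survived(survives, test_stressors):
    """Count the testing stressors for which `survives(stressor)` is true."""
    return sum(1 for stressor in test_stressors if survives(stressor))


all_stressors = [
    "traffic spike", "partner API outage", "currency change",
    "campaign rule change", "data centre failure", "new legal requirement",
]
training, testing = split_stressors(all_stressors)

# Hypothetical judgements of which stressors each architecture withstands.
naive_survives = {"traffic spike"}
residual_survives = {"traffic spike", "partner API outage", "currency change"}

naive_count = count_survived(lambda s: s in naive_survives, testing)
residual_count = count_survived(lambda s: s in residual_survives, testing)
print(naive_count, residual_count)
```

Only the testing stressors are counted, because the new architecture was designed with the training stressors in mind and would otherwise be judged on problems it has already seen.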

This allows the calculation of the residual index Ri. When Ri is greater than 0, the test has been successful and the system is likely to survive any future disruptions.

S = total number of stressors

Y = number of stressors survived by the naive architecture

X = number of stressors survived by the new, residual architecture
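
The formula itself does not appear in the text. One form that is consistent with the definitions above and with the rule that a value greater than 0 means success is the following; treat it as a reconstruction under that assumption rather than as the author's exact notation.

```latex
R_i = \frac{X - Y}{S}
```

As a worked example with made-up numbers: if S = 10, the naive architecture survives Y = 4 stressors, and the residual architecture survives X = 7, then Ri = (7 - 4) / 10 = 0.3, which is greater than 0.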


Putting residuality theory into practice

Residuality theory, although not widely recognized, can be an intriguing framework to explore in practical applications.

Raimo Seero says that people have subconsciously thought in a similar manner before, but never so systematically. He adds that the irony of residuality theory is that when everything is done correctly, you hardly notice that anything is different. In the final stages of development, there are simply fewer problems, and everyone can proceed with their work more easily.

Seero and his team have analysed several past projects in hindsight. One of them involved locking car doors in a project for a car rental company. When the command to lock the doors was sent to the car, a successful response did not always mean that the doors were physically locked, because some component could have failed in the meantime. The initial versions of the app tried to be cautious in terms of user experience (UX) and did not allow door locking if the system believed that the doors were already locked. However, it was quickly realised that it is better to always allow door locking from the app. If such a systematic analysis had been done from the beginning, this issue could perhaps have been avoided.

Another example concerns a retail company campaign project that involved replacing the logic for managing previous campaigns through multiple Uptime and partner systems. The client was initially sceptical about the project’s time commitment, but several unforeseen issues did emerge in the final stages of the project. Thanks to the foresight of the team, these issues were quickly identified and easily resolved. Otherwise, the team would have faced many unpleasant surprises.

Systematic approach for software development

In conclusion, residuality theory offers a systematic approach for software architects and engineers to proactively manage risks in the design phase, thus creating a sustainable software architecture in the face of evolving requirements. By considering best practices, conducting analysis and empirical testing, architects can refine their designs and ensure the longevity and effectiveness of their software systems.